Quick Sort: A Divide-and-Conquer Sorting Algorithm

May 10, 2023Arkar

CS

One more sorting algorithm that I love implementing is quick sorting. I feel clever while sorting that algorithm as well.

On this page

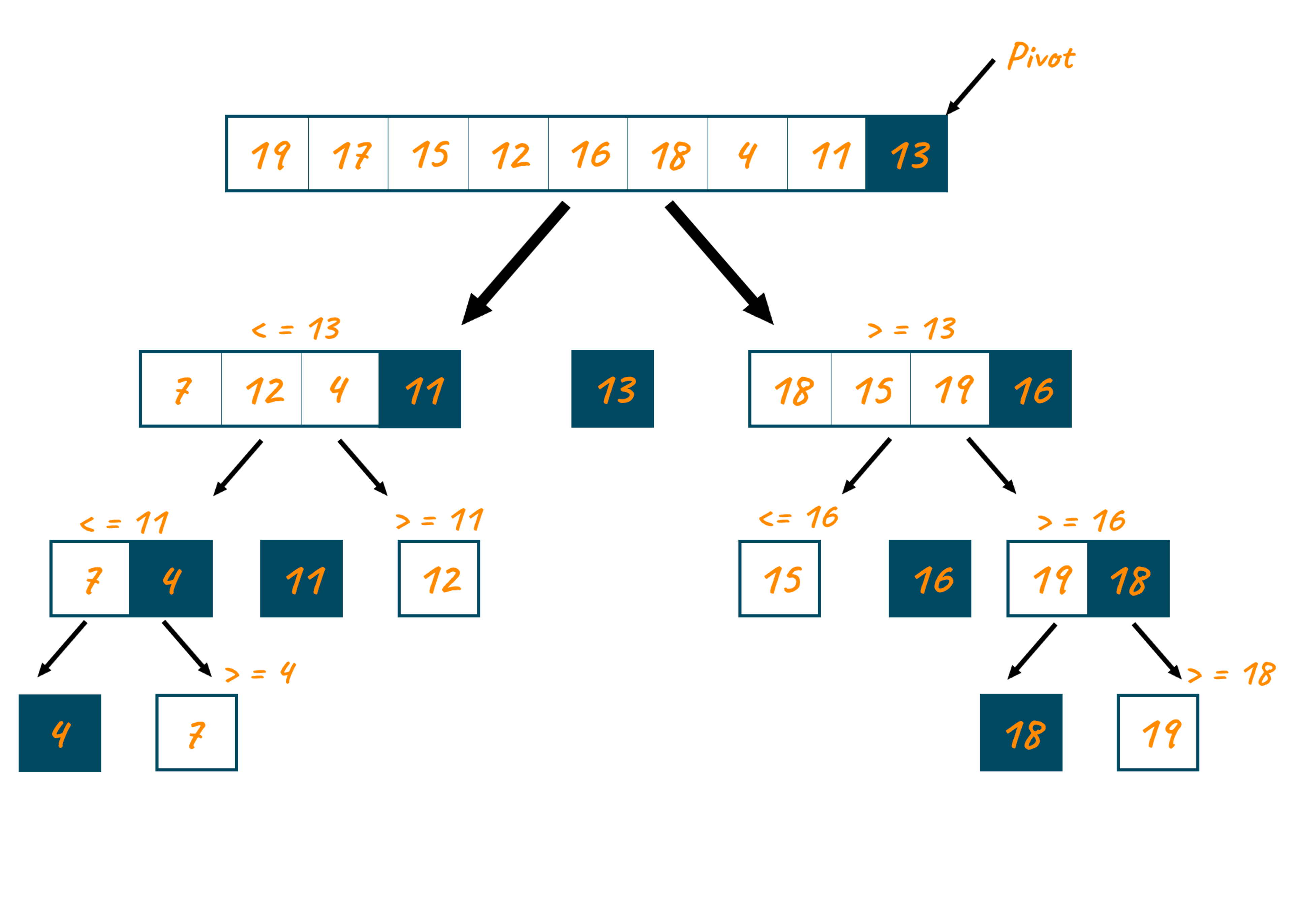

Quick sort is a divide-and-conquer sorting algorithm. It works by selecting a 'pivot' element from the array and partitioning the other elements into two sub-arrays, according to whether they are less than or greater than the pivot. The sub-arrays are then sorted recursively. This can be done in place, requiring small additional amounts of memory to perform the sorting. Then concatenate the two sorted lists and stitch them back together with the pivot. Quick sort is often faster in practice than other algorithms, such as merge sort or heap sort.

One interesting aspect of Quick Sort is that it does not directly compare values. Instead, it relies on partitioning the elements into two sub-arrays based on their relationship to a chosen pivot element. This partitioning is what ultimately leads to the sorted array, as the two sub-arrays are sorted recursively and then concatenated with the pivot.

Choosing the right pivot is often considered the most important step in quick sort. If the pivot is not chosen well, the algorithm can degenerate to O(n^2) time complexity. For example, in a sorted array choosing the first or last element of the array as the pivot will cause quick sort to degenerate to O(n^2) time complexity. So, it's important to choose a pivot that is likely to be near the median value of the array. This can be done by selecting the middle element of the array or by randomly choosing an element from the array.

Dividing and Conquering

Let's say we have an array [5, 2, 1, 8, 4, 7, 6, 3] that we want to sort using quick sort.

First, we select a pivot element from the array. We can choose the last element as the pivot, so our pivot element is 3.

Next, we partition the other elements into two sub-arrays, one with elements less than 3 and one with elements greater than 3. Our two sub-arrays are now [2, 1], [8, 4, 7, 6, 5].

We then recursively sort each sub-array. For the [2, 1] sub-array, we choose 1 as the pivot and partition the sub-array into [] and [2]. We then concatenate the two sorted sub-arrays with the pivot, resulting in [1, 2].

For the [8, 4, 7, 6, 5] sub-array, we choose 5 as the pivot and partition the sub-array into [4], [8, 7, 6]. We then recursively sort each sub-array, which results in [4], [6, 7, 8].

Finally, we concatenate the two sorted sub-arrays with the pivot, resulting in the sorted array [1, 2, 3, 4, 5, 6, 7, 8].

export function quick_sort(arr: number[]) {

if (arr.length < 2) return arr;

let length = arr.length;

let pivot = arr[length - 1];

let leftArr = [];

let rightArr = [];

for (let i = 0; i < length - 1; i++) {

if (arr[i] > pivot) {

rightArr.push(arr[i]);

} else {

leftArr.push(arr[i]);

}

}

let sortedLeft = quick_sort(leftArr);

let sortedRight = quick_sort(rightArr);

return sortedLeft.concat(pivot, sortedRight);

}Time and Space Complexity of Quick Sort

Quick Sort is an algorithm for sorting arrays. It has an average case time complexity of, where n is the number of elements in the array. However, in the worst case, when the pivot is chosen poorly, Quick Sort can take much longer, up to .

To make Quick Sort work better, we can try some tricks to choose the pivot. One way is to select the middle element of the array, while another way is to randomly choose an element from the array. Both of these methods are more likely to give a pivot that is close to the middle value of the array, which helps ensure that Quick Sort runs in time.

| Case | Time Complexity | Space Complexity |

|---|---|---|

| Worst Case | O(n^2) | O(n) |

| Best Case | O(n log n) | O(log n) |

| Average Case | O(n log n) | O(log n) |

Another technique is to compare the first, middle, and last elements of the array and choose the median value as the pivot. This "median of three" method can prevent the worst-case scenario of O(n^2) time complexity that can happen when the pivot is poorly chosen.

The space complexity of Quick Sort is O(log n) in the average case and O(n) in the worst case.

function quickSort(arr:number[]) {

if (arr.length === 0) {

return [];

} else {

const pivotIndex = Math.floor(arr.length / 2);

const pivot = arr[pivotIndex];

const less = [];

const greater = [];

for (let i = 0; i < arr.length; i++) {

if (i === pivotIndex) {

continue;

}

if (arr[i] < pivot) {

less.push(arr[i]);

} else {

greater.push(arr[i]);

}

}

return quickSort(less).concat(pivot, quickSort(greater));

}

}Quick Sort can be unstable, meaning that it may not preserve the order of equal elements. For example, if we had an array [3, 2, 3, 1] and chose the first element as the pivot, we would end up with the sorted array [1, 2, 3, 3]. However, the two 3s are not in their original order. To make Quick Sort stable, we can use an algorithm called "stable Quick Sort," which is similar to regular Quick Sort but uses an extra step to ensure that equal elements are sorted in their original order. Quick sort is can be combined with other sorting algorithms to make them more efficient. For instance, quick sort can be used to sort small sub-arrays in merge sort. In this case, it's called "quick sort for merge sort." The basic idea is that if the sub-array to be sorted is smaller than a particular size, we use quick sort instead of merge sort. This can speed up merge sort when sorting small sub-arrays.

Subscribe to my newsletter

Join my web development newsletter to receive the latest updates, tips, and trends directly in your inbox.

On this page